Kubernetes (K8s) is a portable, extensible, open-source platform for managing containerized workloads and services, that facilitates both declarative configuration and automation. It has a large, rapidly growing ecosystem. Kubernetes services, support, and tools are widely available. Source: https://kubernetes.io

K8s takes very good care of the applications that run on top of it, but the infrastructure is on you!

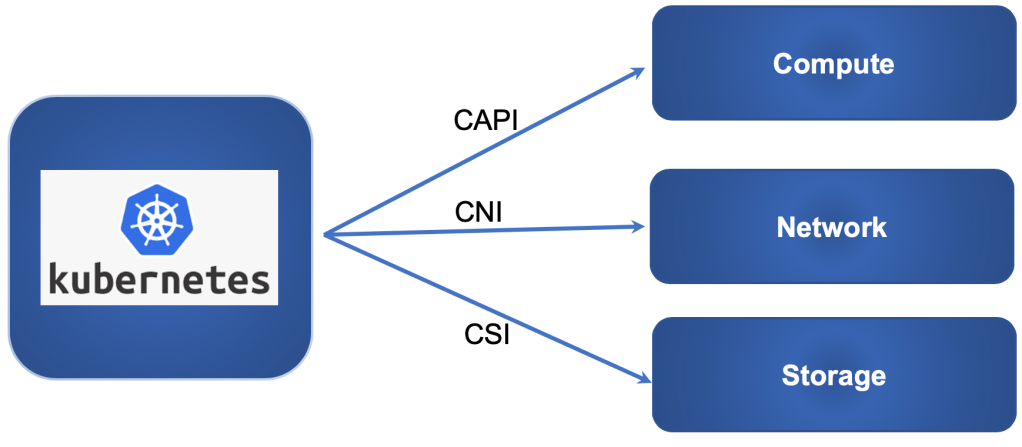

We should keep in mind that K8s is an orchestrator that needs an infrastructure to orchestrate, and that infrastructure needs to understand K8s constructs so it can provision them whenever a YAML file is applied. For example, when you deploy a Service of type “LoadBalancer” or an “Ingress” in K8s, the network needs to react and provision a load balancer based on the configuration stated in that YAML file. Beyond that, the network needs to understand K8s “Network Policy” so it can apply those rules in the infrastructure layer. The same goes for storage: we need the ability to map a storage volume to a K8s “Storage Class” so we can use “Persistent Volume Claims”. Finally, compute needs a Cluster API (CAPI) provider so it can provision K8s clusters on demand and perform cluster life cycle management.
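To make those constructs concrete, here is a minimal sketch of the three kinds of objects the infrastructure has to react to: a Service of type LoadBalancer, a NetworkPolicy, and a PersistentVolumeClaim bound to a Storage Class. It builds them as plain Python dictionaries and prints JSON, which `kubectl apply -f` accepts alongside YAML. The helper function names, the `web`/`frontend` labels, the ports, and the `gold` storage class are illustrative assumptions, not values from any real environment.

```python
import json


def load_balancer_service(name, app_label, port, target_port):
    """Service of type LoadBalancer: the network layer must provision a VIP for it."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": "LoadBalancer",
            "selector": {"app": app_label},
            "ports": [{"protocol": "TCP", "port": port, "targetPort": target_port}],
        },
    }


def allow_from_label_policy(name, app_label, client_label, port):
    """NetworkPolicy: only pods labelled client_label may reach app_label on port."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": name},
        "spec": {
            "podSelector": {"matchLabels": {"app": app_label}},
            "policyTypes": ["Ingress"],
            "ingress": [
                {
                    "from": [{"podSelector": {"matchLabels": {"app": client_label}}}],
                    "ports": [{"protocol": "TCP", "port": port}],
                }
            ],
        },
    }


def persistent_volume_claim(name, storage_class, size):
    """PVC: the storage layer must map storage_class to a real volume."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }


def as_list(manifests):
    """Wrap several objects in a v1 List so they can be applied as one file."""
    return {"apiVersion": "v1", "kind": "List", "items": manifests}


if __name__ == "__main__":
    # All names and values below are hypothetical placeholders.
    bundle = as_list(
        [
            load_balancer_service("web", "web", 80, 8080),
            allow_from_label_policy("web-allow-frontend", "web", "frontend", 8080),
            persistent_volume_claim("web-data", "gold", "10Gi"),
        ]
    )
    print(json.dumps(bundle, indent=2))
```

Saving the output to a file and running `kubectl apply -f` on it only succeeds end to end if something underneath K8s can actually hand out the load balancer address, enforce the policy, and carve out the volume, which is exactly the role of the infrastructure described above.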

One of the main advantages of K8s is its extensibility and modularity. It can interface with compute using Cluster API (CAPI), with the network using Container Network Interface (CNI), and with storage using Container Storage Interface (CSI).

These integrations are typically hidden from the user in the public cloud when getting K8s-as-a-service, but what about on-prem? Can we get the same level of abstraction? Yes, we can, with vSphere with Kubernetes on VMware Cloud Foundation, but let's talk about that later.

What infrastructure should I use to run K8s on-prem?

What we recommend is to avoid building an infrastructure silo inside your data center just for containers, and instead modernize your IT infrastructure to cater for all kinds of workloads, whether they are containers or virtual machines. This is especially important given that most modern enterprise applications continue to run on VMs and many are a hybrid of the two.

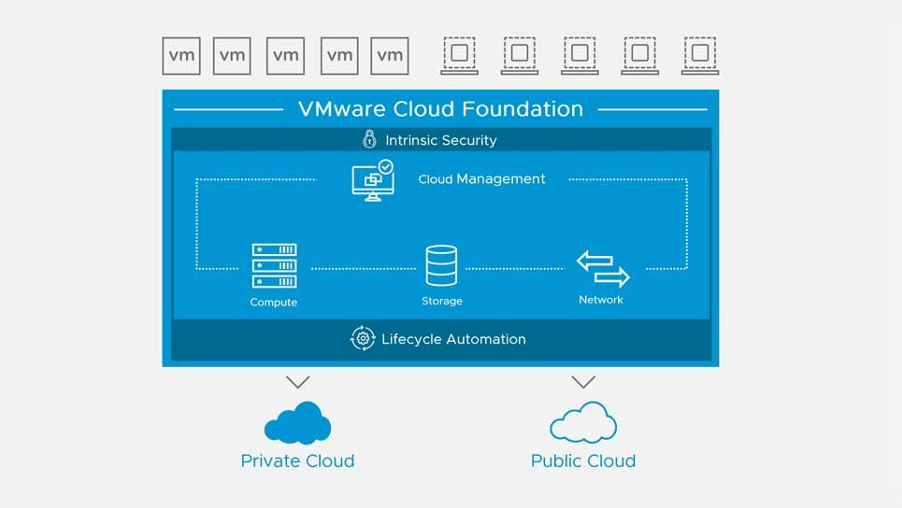

Another point to keep in mind is that this infrastructure needs to provide a consistent operating model not only for containers and VMs, but also across private and public clouds.

That takes me to the VMware Software Defined Data Center (SDDC). It provides all the infrastructure components that K8s needs, both on-prem and in the public cloud. It supports CAPI, CNI, and CSI with unique advantages in each of them, but more importantly, it provides an end-to-end solution for K8s infrastructure with best-of-breed components like vSphere, NSX, and vSAN. And let us not forget the cloud management capabilities of the vRealize Suite.

So what is the easiest and quickest way to deploy VMware SDDC?

VMware Cloud Foundation (VCF) is the quickest and easiest path to the SDDC. VCF provides an integrated stack that bundles compute virtualization (vSphere), storage virtualization (vSAN), network virtualization (NSX), and cloud management and monitoring (vRealize Suite) into a single platform that can be deployed on-premises or run as a service in the public cloud. VCF automates the deployment and provides life cycle management (LCM) for all the SDDC components.

My infrastructure is ready. What is the easiest way to deploy K8s on the SDDC? Could I provide K8s as a service for my Dev/DevOps teams?

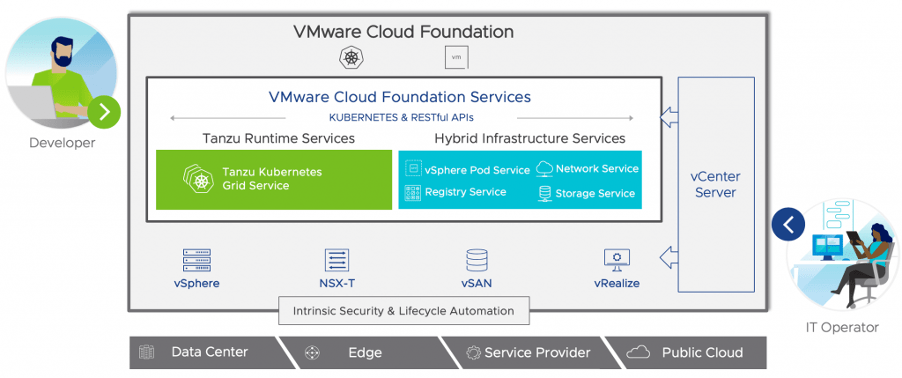

The good news is that starting with VCF 4.0, the SDDC comes with an integrated K8s solution known as vSphere 7 with Kubernetes. It embeds K8s inside vSphere 7 and transforms vSphere clusters into K8s clusters. vSphere 7 with K8s is a revolutionary solution because it makes K8s on-prem just as easy as in the public cloud.

With vSphere 7 with Kubernetes, we implement a K8s control plane on the vSphere cluster and then access it using the K8s API. We call it the Supervisor Cluster. Using the Supervisor Cluster, we can provision and operate VMs and containers together on a single vSphere cluster.

Within the Supervisor Cluster, we automate network services using CNI with NSX, and storage services using CSI with vSAN.

We even enable a registry service from vCenter with Harbor, which gives us a repository for container images and Helm charts.

More than that, we provide K8s-as-a-service for Dev and DevOps teams using CAPI in the Supervisor Cluster. We call those clusters Tanzu Kubernetes Grid (TKG) clusters. In that architecture, the Supervisor Cluster acts as a CAPI management cluster used to bootstrap the TKG clusters.
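As a rough sketch of what "K8s-as-a-service" looks like from the developer side, the request for a TKG cluster is itself just a K8s object applied to the Supervisor Cluster. The snippet below builds one as a Python dictionary and prints it as JSON. The `run.tanzu.vmware.com/v1alpha1` API version and the field layout are written from memory of the vSphere 7 documentation and should be checked against the release you run; the cluster name, namespace, version string, VM class, and storage class are hypothetical placeholders.

```python
import json


def tanzu_kubernetes_cluster(name, namespace, version, vm_class, storage_class,
                             control_plane_count=1, worker_count=3):
    """Build a TanzuKubernetesCluster request (field names are an assumption;
    verify them against the documentation for your vSphere release)."""

    def node_pool(count):
        # Every node in a pool shares one VM class and one storage class.
        return {"count": count, "class": vm_class, "storageClass": storage_class}

    return {
        "apiVersion": "run.tanzu.vmware.com/v1alpha1",
        "kind": "TanzuKubernetesCluster",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "distribution": {"version": version},
            "topology": {
                "controlPlane": node_pool(control_plane_count),
                "workers": node_pool(worker_count),
            },
        },
    }


if __name__ == "__main__":
    # Placeholder values: substitute the namespace, VM class, and storage
    # class that actually exist in your Supervisor Cluster.
    cluster = tanzu_kubernetes_cluster(
        name="dev-cluster-01",
        namespace="dev-team",
        version="v1.16",
        vm_class="best-effort-small",
        storage_class="example-storage-policy",
    )
    print(json.dumps(cluster, indent=2))
```

The point of the sketch is the shape of the workflow: the developer declares how many control plane and worker nodes they want, and the Supervisor Cluster, acting as the CAPI management cluster, turns that declaration into VMs, networking, and storage.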

VMware SDDC is an ideal place to run K8s, and today it is easier than ever to go from 0-to-K8s using VCF 4.0.

Thank you for reading!